Stephen T Asma writes: After you spend time with wild animals in the primal ecosystem where our big brains first grew, you have to chuckle a bit at the reigning view of the mind as a computer. Most cognitive scientists, from the logician Alan Turing to the psychologist James Lloyd McClelland, have been narrowly focused on linguistic thought, ignoring the whole embodied organism. They see the mind as a Boolean algebra binary system of 1 or 0, ‘on’ or ‘off’. This has been methodologically useful, and certainly productive for the artificial intelligence we use in our digital technology, but it merely mimics the biological mind. Computer ‘intelligence’ might be impressive, but it is an impersonation of biological intelligence. The ‘wet’ biological mind is embodied in the squishy, organic machinery of our emotional systems, where action-patterns are triggered when chemical cascades cross volumetric tipping points.

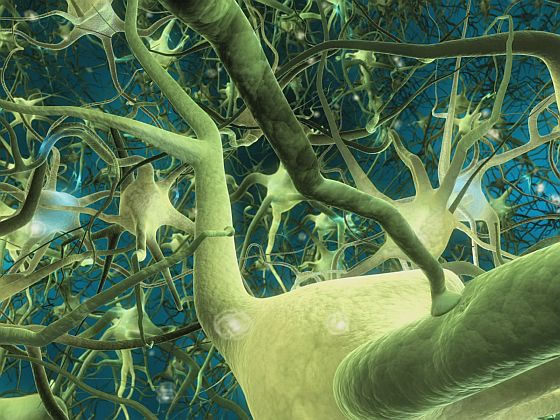

Neuroscience has begun to correct the computational model by showing how our rational, linguistic mind depends on the ancient limbic brain, where emotions hold sway and social skills dominate. In fact, the cognitive mind works only when emotions preferentially tilt our deliberations. The neuroscientist Antonio Damasio worked with patients who had damage in the communication system between the cognitive and emotional brain. The subjects could compute all the informational aspects of a decision in detail, but they couldn’t actually commit to anything. Without clear limbic values (that is, feelings), Damasio’s patients couldn’t decide their own social calendars, prioritise jobs at work, or even make decisions in their own best interest. Our rational mind is truly embodied, and without this emotional embodiment we have no preferences. In order for our minds to go beyond syntax to semantics, we need feelings. And our ancestral minds were rich in feelings before they were adept in computations.

Our neo-cortex mushroomed to its current size less than one million years ago. That’s a very recent development when we remember that the human clade or group broke off from the great apes in Africa 7 million years ago. That future-looking, tool-wielding, symbol-juggling cortex grew on top of the limbic system. Older still is the reptile brain, the storehouse of innate motivational instincts such as pain-avoidance, exploration, hunger, lust, aggression and so on. Walking around (very carefully) on the Serengeti is like visiting the nursery of our own mind.